Generative AI Infrastructure Services

Your organization doesn’t need another AI model. You need the infrastructure to run AI reliably in production.

The Infrastructure That Makes AI Actually Work

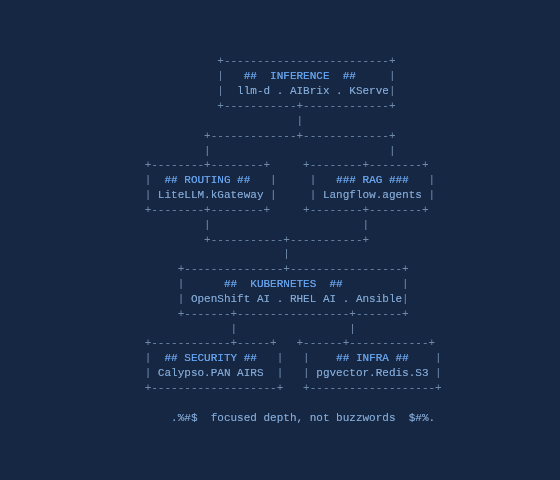

WorldTech IT’s Generative AI Infrastructure practice focuses on the foundational pieces that make AI applications work: inference serving, model routing, vector databases, caching layers, and the Kubernetes orchestration that ties it together. We don’t train models. We build the infrastructure that lets your teams consume AI without reinventing the wheel.

Here’s what most AI vendors won’t tell you: AI infrastructure is still infrastructure. The Postgres database backing your vector search is the same Postgres you’ve run for years. The Redis cache, the S3 storage, the Kubernetes orchestration, the networking. It’s all the same foundational technology. What’s new is the workload, not the fundamentals. Our team has spent years mastering Linux, networking, Kubernetes, and the infrastructure that runs enterprise systems. We’re applying that expertise to make AI operationally solid from day one, so that as models evolve and the ecosystem matures, your infrastructure doesn’t need to be rebuilt.

What We Focus On

We’re not trying to cover every tool in the AI ecosystem. We go deep on a focused set of infrastructure components that enterprises actually need to run inference workloads in production.

Inference & Model Serving

Running inference at scale requires more than spinning up a container. We deploy distributed inference infrastructure that handles the hard problems: routing, caching, scaling, and failover.

- llm-d: Kubernetes-native distributed inference with prefill/decode disaggregation and KV cache-aware routing for large models

- AIBrix: High-density LoRA management and LLM-aware autoscaling from the vLLM project

- KServe: Standardized model serving on Kubernetes (CNCF)
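To make "KV cache-aware routing" concrete, here is a minimal Python sketch of the idea: send a request to the replica that already holds the longest matching prompt prefix in its cache, and fall back to the least-loaded replica on a tie. This is an illustration only, not llm-d's actual scheduler; the `Replica` class, the `pick_replica` function, the pod names, and the token values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """A hypothetical inference replica and the token prefixes it has cached."""
    name: str
    active_requests: int = 0
    cached_prefixes: list = field(default_factory=list)


def shared_prefix_len(a, b):
    """Count how many leading tokens two sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def pick_replica(replicas, prompt_tokens):
    """Prefer the longest cached prefix; break ties by lower load."""
    def score(replica):
        best = max(
            (shared_prefix_len(p, prompt_tokens) for p in replica.cached_prefixes),
            default=0,
        )
        # Tuples compare element by element, so load only matters on a tie.
        return (best, -replica.active_requests)
    return max(replicas, key=score)


# Illustrative data: token IDs and pod names are made up.
replicas = [
    Replica("pod-a", active_requests=3, cached_prefixes=[(1, 2, 3, 4)]),
    Replica("pod-b", active_requests=1, cached_prefixes=[(1, 2, 9)]),
    Replica("pod-c", active_requests=0),
]
print(pick_replica(replicas, (1, 2, 3, 7)).name)
```

A busier replica can still win when it lets the model skip recomputing a long shared prefix, which is the trade-off a cache-aware router has to weigh against plain load balancing.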

Model Routing & Gateways

Most enterprises need to route between multiple models: self-hosted, cloud APIs, different providers. We architect the routing layer so you can swap models without touching application code.

- LiteLLM: Unified API proxy across OpenAI, Anthropic, Azure, Bedrock, and self-hosted models

- kGateway: Envoy-based Gateway API implementation with MCP and A2A protocol support for agentic workloads
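The "swap models without touching application code" idea can be sketched in a few lines of Python. Application code asks for a logical alias, and a routing table decides which backend serves it. This is a simplified illustration under stated assumptions, not the LiteLLM or kGateway API: `ModelRouter`, `Route`, `fake_backend`, the alias `chat-default`, and the model names are hypothetical stand-ins, and the backends return canned strings instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Route:
    """Where a logical alias currently points (all values are illustrative)."""
    provider: str
    model: str
    call: Callable[[str, str], str]


def fake_backend(provider):
    """Build a stand-in backend that echoes the request instead of calling a model."""
    def call(model, prompt):
        return f"[{provider}/{model}] {prompt}"
    return call


class ModelRouter:
    """Maps stable aliases used by applications to swappable backends."""

    def __init__(self):
        self._routes = {}

    def register(self, alias, route):
        # Re-registering an alias repoints it without any application change.
        self._routes[alias] = route

    def complete(self, alias, prompt):
        route = self._routes[alias]
        return route.call(route.model, prompt)


router = ModelRouter()
router.register("chat-default", Route("cloud-api", "model-x", fake_backend("cloud-api")))


def summarize(text):
    """Application code: it only ever knows the alias."""
    return router.complete("chat-default", f"Summarize: {text}")


before = summarize("quarterly report")
# Operations repoints the alias to a self-hosted model; summarize() is unchanged.
router.register("chat-default", Route("self-hosted", "model-y", fake_backend("self-hosted")))
after = summarize("quarterly report")
print(before)
print(after)
```

In a real deployment the routing table would live in the gateway or proxy configuration rather than in process, so the swap is a configuration change instead of a code release.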

The Infrastructure Everyone Forgets

AI applications don’t run in isolation. They need the same foundational infrastructure that every serious application needs, and most AI projects underestimate this until they hit production.

| Component | What We Deploy |
| --- | --- |
| Vector Database | PostgreSQL with pgvector. Semantic search and RAG without another database to manage. |
| Caching | Redis for semantic caching, session state, and response optimization |
| Object Storage | S3-compatible storage for model artifacts and document corpora |
| Networking | Arista datacenter networking, F5 for large-scale ingress |
| Hardware | Dell servers with GPU configurations for inference workloads |
| Max-GHz CPUs, deep cache | Flow tables stay local; no remote-memory stalls. |
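Semantic caching is the least familiar item in that table, so here is a minimal Python sketch of the idea: reuse a stored response when a new query's embedding is close enough to one already answered. It assumes toy three-dimensional embeddings and an in-memory list; a production setup would get embeddings from a real model and keep entries in a store such as Redis. The `SemanticCache` class, the 0.95 threshold, and the sample vectors are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """In-memory stand-in for a semantic cache (illustrative only)."""

    def __init__(self, threshold=0.95):
        self.threshold = threshold
        self._entries = []  # list of (embedding, response) pairs

    def put(self, embedding, response):
        self._entries.append((embedding, response))

    def get(self, embedding):
        """Return the closest stored response, or None below the threshold."""
        best_score, best_response = 0.0, None
        for stored, response in self._entries:
            score = cosine_similarity(stored, embedding)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.95)
cache.put([0.9, 0.1, 0.0], "Our office opens at 9am.")

hit = cache.get([0.88, 0.12, 0.01])   # near-duplicate question
miss = cache.get([0.0, 0.2, 0.9])     # unrelated question
print(hit, miss)
```

The threshold is the main tuning knob: set it too low and users get answers to questions they did not ask, set it too high and the cache rarely saves a model call.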

RAG & Agent Infrastructure

Most real-world AI applications are RAG pipelines or agents, not raw model calls. We deploy the infrastructure to build and run these architectures reliably.

- Langflow: Visual development platform for RAG pipelines and AI agents

- We implement deployment patterns with proper observability, monitoring, and guardrails.


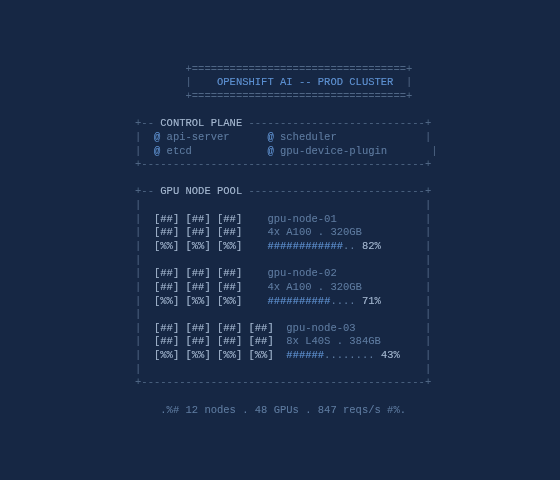

Kubernetes & Platform

OpenShift is our preferred enterprise Kubernetes platform. We’re a Red Hat partner with deep expertise across OpenShift, RHEL, and Ansible. But we understand GenAI sometimes requires flexibility. If NVIDIA’s reference stack on Ubuntu is what your use case demands, we’ll build that instead.

- OpenShift & OpenShift AI: Enterprise Kubernetes with Red Hat’s integrated AI platform. vLLM-based model serving, pipelines, and the enterprise support that procurement teams require.

- RHEL AI: On-premises inference with Granite models and InstructLab for model customization on the RHEL platform you already know

- Ansible Automation: Open source AI tooling is powerful but can be a management headache at scale. We use Ansible to automate deployment, configuration, and lifecycle management across your AI infrastructure.

- We handle GPU scheduling, node pools, and resource management optimized for inference workloads.

- We build hybrid architectures: on-prem for sensitive workloads, cloud for burst capacity.

Security Integration

AI workloads need security like any other workload. We bring in the right fit from our Palo Alto and F5 practices, CSP-native options, or open source tools depending on your requirements.

- F5 Calypso: AI Guardrails and Red Team capabilities that run on-prem, private cloud, public cloud, or SaaS. Our go-to when deployment flexibility matters.

- Palo Alto AIRS: Cloud-delivered AI security with native integrations into enterprise SaaS platforms like Microsoft Copilot and Salesforce Agentforce.

- CSP & Open Source: Azure AI Content Safety, AWS Bedrock Guardrails, Microsoft Presidio for PII detection, and other options based on your environment.

- We architect security into your gateway layer. Runtime protection without additional latency hops.

How We Engage

Assessment & Architecture

We assess your environment, use cases, and constraints to deliver a roadmap that fits your organization. Not a generic reference architecture.

Proof of Concept

We build a working POC with your data and models so you can validate the approach before committing to full implementation.

Implementation

Full deployment with documentation, runbooks, and integration with your change control processes. We make sure your team can operate it. We don’t hand you a working system and disappear.

Managed Services

Ongoing support with the same US-based engineering team that built it.

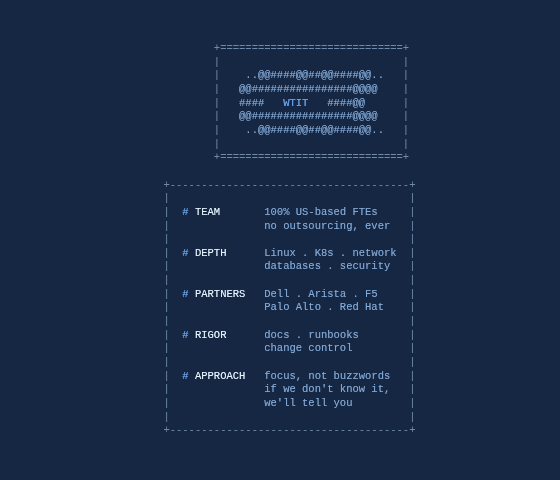

Why WorldTech IT

- Infrastructure Veterans: Linux, networking, Kubernetes, and database experts who’ve been operationalizing complex systems for years. AI is a new workload, but solid infrastructure is what we do.

- Focus, Not Buzzwords: We go deep on specific tools rather than claiming expertise in everything. If we don’t know it, we’ll tell you.

- Full-Stack Integration: Dell hardware, Arista networking, F5 and Palo Alto security, Red Hat platforms. We integrate across the stack because we have practices in each.

- Documentation & Process: Every deployment includes full documentation, runbooks, and change control integration. The same rigor we bring to F5 and Palo Alto work.

- US-Based Engineering: All work performed by full-time WorldTech IT employees. We don’t outsource.